Invited Talks

Extreme PyTorch: Inside the Most Demanding ML Workloads—and the Open Challenges in Building AI Agents to Democratize Them

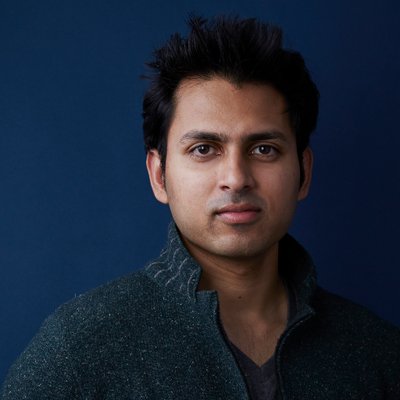

Speaker: Soumith Chintala

Lessons Learned from Successful PhD Students

Speaker: Tim Dettmers

LMArena: An Open Platform for Crowdsourced AI benchmarks

Speaker: Wei-Lin Chiang

Designing Models from the Hardware Up

Speaker: Simran Arora

An AI stack: from scaling AI workloads to evaluating LLMs

Speaker: Ion Stoica

Hardware-aware training and inference for large-scale AI

Speaker: Animashree Anandkumar

Responsible Finetuning of Large Language Models

Speaker: Ling Liu
